Introduction

CUDA was announced along with G80 in November 2006, released as a public beta in February 2007, and then finally hit the Version 1.0 milestone in June 2007 along with the launch of the G80-based Tesla solutions for the HPC market. Today, we look at the next stage in the CUDA/Tesla journey: GT200-based solutions, CUDA 2.0, and the overall state of NVIDIA's HPC business.

The article is divided in three parts: the technical & performance aspects, a chat with Andy Keane, General Manager of the GPU Computing group, and real-world applications & financial aspects.

GPGPU has come a very long way since the initial interest in the technology in 2003/2004, both in terms of software and hardware. For the former, there are now major applications throughout all main parts of the HPC market and there is a growing interest in using GPGPU in the consumer space for tasks such as video encoding, compression, physics processing, and much more. On the hardware side, many of the original limitations of GPU architectures have been removed and what remains is mostly related to the inherent trade-offs of massively threaded vector-like processors.

Similarly, CUDA has also come a long way. In many industries, we are still in the software development phase and large deployments are unimaginable; however, revenue will definitely still start rolling this year. Furthermore, the number of companies in an advanced phase of development seems to guarantee good momentum going into 2009 and 2010, generating a significant and hard to neglect head start against both AMD and Intel’s GPGPU solutions throughout the HPC market.

However, the original G80-based Tesla line-up does not seem to have generated an overwhelming amount of revenue, with many of the initial sales likely coming from companies prototyping the technology and some universities. Today’s announcement of a new generation of Tesla cards is thus especially significant, as it will likely represent the first GPGPU line-up shipping in large relative volumes.

Tesla 10-Series: What’s the Big Deal?

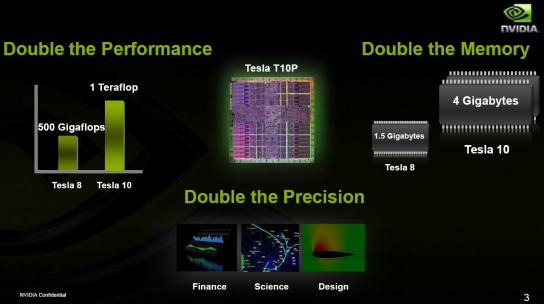

This slide is pretty much exactly what you would expect after reading our first GT200 article, with one major exception: 4GiB of memory. The original Tesla ‘only’ doubled the amount of video memory compared to what’s available on GeForce, from 768MiB to 1.5GiB. On the other hand, Tesla-10 quadrupled it from 1GiB to 4GiB based on customer feedback. In some markets, much of this memory will go wasted; in others, such as Oil & Gas, this is an absolutely huge deal and well worth the cost.

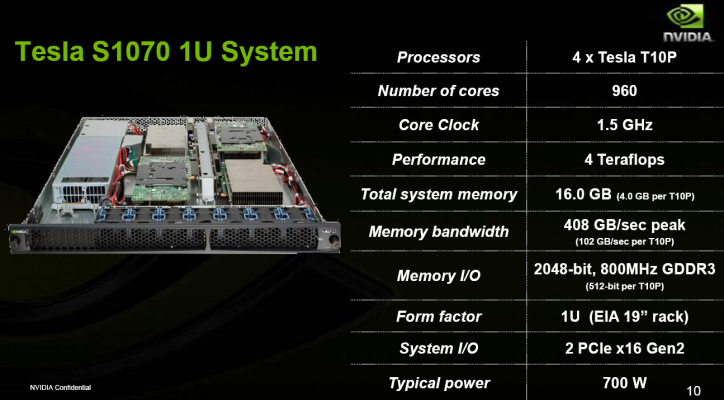

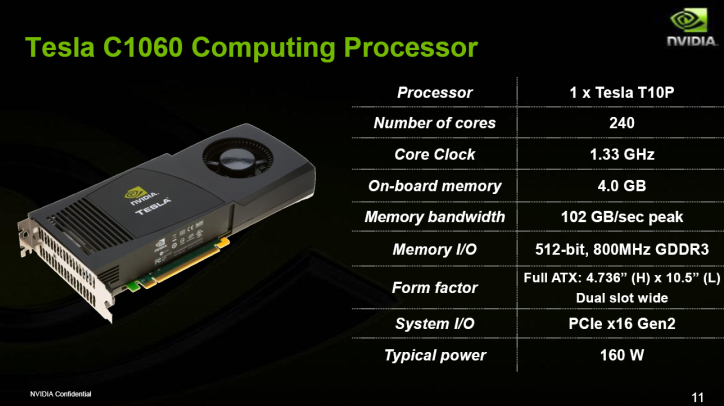

More specifically, NVIDIA announced two new products today: the Tesla S1070, a 4-GPU 1U server with a peak single-precision throughput of 4.32TFlops, and the Tesla C1060, a single-GPU board with a peak throughput of 960GFlops. Rather than summarize their characteristics, we’ll just save everyone some time and show you NVIDIA’s own slides:

The obviously missing element here is pricing. The original G80-based C870 and S870 were launched at $1300 and $12000 respectively. This time around, the relative pricing changes drastically: the C1060 will sell for $1700, but the S1070 will only cost $8000. There’s also no ‘deskside’ solution this time around, and that’s mostly because of the lower relative interest they’re seeing in the market. People would much rather put a board in a classic workstation or in a nearby data center. So that’s not a huge surprise, but why the big change in pricing scheme?

As Andy Keane told us (see: our chat/interview with him), the majority of their revenue this year will be for 1U servers. All of the high volume contracts they might get will likely be based on it. So it turns out that’s what their potential customers are going to use most to judge performance/dollar and, therefore, it would likely hurt overall CUDA/GPGPU adoption rates if they tried to extract excessive margins out of this part of the business. At this relatively early stage in the game, that could be a fatal mistake.

Now that we’ve got the basics out of the way, let’s take some time to examine the improvements in performance and feature-set more closely…