Other CUDA 2.0 Features

There have been many improvements to both the software and hardware side of things between the release of G80 and GT200. For example, G84/G86 added support for global memory atomics, and NVIDIA has recently released a visual profiler beta. Both are very big steps in the right direction, but we won’t look into these factors right now and will merely examine two of the new features in CUDA 2.0 instead.

The most significant hardware enhancement is support for shared memory atomics. One simple application would be counting how many times each character occurs in a string, as required for Huffman encoding during file compression (that’s not necessarily the best way to do it; it’s just an example). We were told performance would be ‘pretty good’ assuming there aren’t too many conflicts, so we’ll be curious to see how it behaves in the real world. For what it’s worth, we definitely think it’s a potentially very useful feature.
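To make the character-counting example concrete, here is a minimal CPU-side sketch of the pattern in C++. It is not CUDA code and says nothing about GPU performance: `std::atomic` merely stands in for atomic operations on shared memory, and the names `count_bytes`, `kBins` and `kThreadsPerBlock` are hypothetical. Each "block" of the input gets a private histogram that several threads update atomically, and the block's counts are merged into the final result once the block is done.

```cpp
#include <algorithm>
#include <array>
#include <atomic>
#include <cassert>
#include <cstddef>
#include <string>
#include <thread>
#include <vector>

// Hypothetical names throughout; a CPU model of the pattern, not CUDA code.
constexpr std::size_t kBins = 256;           // one bin per possible byte value
constexpr std::size_t kThreadsPerBlock = 4;  // illustrative value only

// Counts how often each byte value occurs in `text`.
// The input is cut into blocks of `block_size` bytes. Each block owns a
// private histogram (standing in for shared memory) that its threads
// update with atomic increments.
std::array<unsigned, kBins> count_bytes(const std::string& text,
                                        std::size_t block_size) {
    assert(block_size > 0);
    std::array<unsigned, kBins> total{};

    for (std::size_t begin = 0; begin < text.size(); begin += block_size) {
        const std::size_t end = std::min(begin + block_size, text.size());

        // Block-local bins, explicitly zeroed before use.
        std::array<std::atomic<unsigned>, kBins> local{};
        for (auto& bin : local) bin.store(0, std::memory_order_relaxed);

        // Threads of the block walk the block's bytes in a strided fashion.
        std::vector<std::thread> threads;
        for (std::size_t t = 0; t < kThreadsPerBlock; ++t) {
            threads.emplace_back([&, t] {
                for (std::size_t i = begin + t; i < end; i += kThreadsPerBlock) {
                    const auto byte = static_cast<unsigned char>(text[i]);
                    // Two threads hitting the same bin is a "conflict";
                    // the atomic increment keeps the count correct.
                    local[byte].fetch_add(1, std::memory_order_relaxed);
                }
            });
        }
        for (auto& th : threads) th.join();

        // Merge the finished block into the overall histogram.
        for (std::size_t b = 0; b < kBins; ++b) {
            total[b] += local[b].load(std::memory_order_relaxed);
        }
    }
    return total;
}
```

Calling `count_bytes("abracadabra", 4)` yields a table whose entry for `'a'` is 5. The more often threads land on the same bin, the more the atomic increments serialise, which is the 'conflicts' caveat mentioned above.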

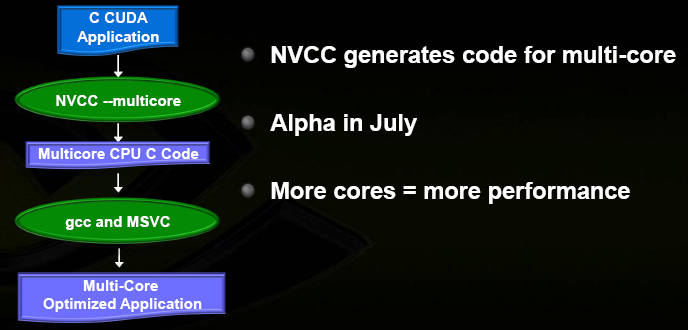

On the other side of the spectrum, one of the big new features in CUDA 2.0 has nothing to do with GPUs: you will now be able to compile CUDA code into SSE-based multithreaded C code that runs efficiently on the CPU. That’s right, not just device emulation; the genuine goal is to make it run as fast as possible on Intel and AMD CPUs. In a way, that’s quite similar to RapidMind.

There are a variety of reasons and consequences here, but the most interesting for Beyond3D readers might be what it means for game developers. Using CUDA in a game made no sense until now, because you would either be shutting out Radeon users or have to write the code a second time for the CPU. But now, by writing scalable CUDA code for, say, an advanced massively parallel weather system (rain, snow, dust storms, etc.), you might also achieve better CPU performance than if you had written the CPU code yourself with much less extensive use of SSE. So suddenly, this becomes a very appealing approach.

One key advantage of the approach may also be that it is inherently very scalable to an ever-increasing number of CPU cores. Every CUDA ‘block’ is run in a separate CPU hardware thread (1 per core, or 2 with SMT). There is no possible form of communication between blocks in CUDA outside of global memory atomics, so synchronisation overhead is very low and doesn’t increase dramatically with many cores.
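As a rough illustration of why this scales, here is a minimal C++ sketch of blocks being spread across CPU threads. It is not the code NVIDIA's compiler generates: `run_grid` and `sum_with_blocks` are hypothetical names, and handing out blocks through a shared counter is our own assumption about scheduling. The point is only that each block works on private data and touches shared state exactly once, through a single atomic add.

```cpp
#include <algorithm>
#include <atomic>
#include <cassert>
#include <cstddef>
#include <thread>
#include <vector>

// Hypothetical helper: calls kernel(block_index) once for every block in the
// grid, spreading the blocks over `workers` CPU threads.
template <typename Kernel>
void run_grid(std::size_t num_blocks, unsigned workers, Kernel kernel) {
    if (workers == 0) workers = 1;
    std::atomic<std::size_t> next_block{0};

    std::vector<std::thread> pool;
    for (unsigned w = 0; w < workers; ++w) {
        pool.emplace_back([&] {
            for (;;) {
                // Claim the next unprocessed block; blocks never wait on
                // each other, so this counter is the only scheduling cost.
                const std::size_t b =
                    next_block.fetch_add(1, std::memory_order_relaxed);
                if (b >= num_blocks) break;
                kernel(b);
            }
        });
    }
    for (auto& t : pool) t.join();
}

// Example "kernel": sums a vector. Each block reduces its own slice into a
// private accumulator, then publishes the result with one atomic add
// (standing in for a global memory atomic).
long long sum_with_blocks(const std::vector<int>& data,
                          std::size_t block_size, unsigned workers) {
    assert(block_size > 0);
    std::atomic<long long> total{0};
    const std::size_t num_blocks = (data.size() + block_size - 1) / block_size;

    run_grid(num_blocks, workers, [&](std::size_t block) {
        const std::size_t begin = block * block_size;
        const std::size_t end = std::min(begin + block_size, data.size());

        long long partial = 0;  // block-private, no synchronisation needed
        for (std::size_t i = begin; i < end; ++i) partial += data[i];

        total.fetch_add(partial, std::memory_order_relaxed);
    });
    return total.load();
}
```

In practice one might pass `std::thread::hardware_concurrency()` as the worker count. Because the result does not depend on which thread runs which block, the same code gives the same answer with one worker or many.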

An interesting way to look at it is that CUDA forces you to restrict data dependencies to a very efficient subset based on shared memory. There is often an inherent base performance disadvantage to this, but it definitely has plenty of advantages, and the model is very easy to understand compared to some of the alternatives (assuming the algorithm lends itself to such a reformulation). The generated C code is also fully exposed, so you can learn from it or even try to improve it manually if you want to. As we said, this seems quite similar to RapidMind’s solution.

We suspect, although we aren’t sure, that one reason why NVIDIA decided to bother is that this could make CUDA, and data parallelism in general, a much more credible paradigm in the view of the development and academic communities. If, despite all of its limitations, this model can achieve excellent performance even on multi-core CPUs, then that’s a clear indication that perhaps it truly is a viable way forward. And unlike the other parallel programming models currently being researched, this one is out there today, proven in hundreds of applications and backed by roughly seven exaflops (an exaflop being a million teraflops!) of sustained aggregate hardware performance.

It is important to understand that such a realization wouldn’t just be good for NVIDIA; it would be good for everyone, including AMD and Intel. Anything that somehow helps to diminish the gravity of the ongoing multithreaded software crisis is a big step in the right direction for everyone involved in the world of computation.

Summary

While the Tesla 10-Series obviously isn’t anywhere near as disruptive as the original Tesla, it is nonetheless an impressive improvement for the HPC market and the software infrastructure has also clearly been moving in the right direction. Performance has gone up by 2-2.5x but pricing for the 1U solution actually went down, resulting in 3-3.5x higher performance/dollar and substantially higher performance/watt. Meanwhile, improvements in the CPU world have been much less drastic.

Furthermore, while double precision is slower than expected, the implementation is in no way ‘botched’ and it will be very easy to scale upwards in the future. And while 512-bit GDDR3 versus 256-bit GDDR5 is a complex trade-off in the consumer market, it’s a clear advantage here: we simply don’t see a way to get that much memory on the card otherwise. As for x86 CUDA, it’s hard to estimate what its impact will be (if any), but it’s certainly an interesting development.

But while this may all be quite impressive, there have been plenty of exciting designs in the past which have failed to live up to expectations; they never really gained any momentum and flopped financially. Given that the original Tesla hardly broke any sales records, we wondered just how far NVIDIA really was from making larger shipments and whether they still expected noticeable revenue this year. That’s why we poked Andy Keane over dinner at Editors’ Day, and he was nice enough to answer this as well as a number of other questions, so don’t miss the two other parts of the article...