Some Examples & Financial Aspects

Let’s begin by examining CUDA applications in some of the many fields where it is being used today or will be in the near future. It’s worth pointing out that the vast majority of these applications did not exist one year ago. Amusingly, many of the developers seem to have learned about CUDA by sheer chance, such as through a GeForce review when trying to decide which board to buy for their ‘casual gaming habit’, or in a presentation mentioning it along with other trends.

This reinforces another key point: momentum. Many of the applications of the future will be developed by engineers or scientists who learned about CUDA by discovering what others were doing with it. This kind of feedback effect takes time to build up, but it’s pretty obvious GPGPU is now on the right track. For example, following the announcement of one or two CUDA-based Electronic Design Automation tools from startups, there is now considerable interest throughout the industry in the technology and how to apply it to a variety of problems. The same trend applies across CUDA and Tesla’s other target markets, although it is obviously impossible to quantify objectively.

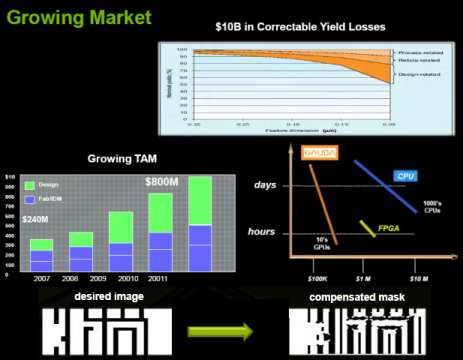

EDA/Semiconductor Industry: One of the first key applications for CUDA in the EDA industry came from Gauda.

If the holy grail of artificial intelligence is to have it design its own replacement, then surely the holy grail of GPUs has to be to render the ‘graphics’ (in the form of OASIS or GDSII) of the next generation of chips before they’re sent to the factory for tape-out. Gauda does ‘Semiconductor Optics Correction’ (aka optical proximity correction & verification), a process that has become required over the last several years on 180nm technology and below.

The basic idea is that lithographic equipment hasn’t fully kept pace with Moore’s Law, so the precision available to ‘draw’ the transistors is poor relative to their size. It is therefore necessary to take into consideration what is being drawn nearby (through far-from-trivial algorithms) and adjust the layout to make sure that the connections that should exist are made and that no unintended ones are created. This process has historically been done on CPU clusters, and some have been going down the FPGA route more recently, but Gauda has now proven that the GPU is by far the best commodity hardware available for this problem.

The main advantage of this speed-up, beyond potentially lower costs, is that it allows you to run more iterations (improving yields very slightly) while simultaneously reducing the cycle time for new chips by several days (and therefore the ‘time-to-money’, thus improving overall profitability). Another early EDA application for GPGPUs is OmegaSim GX from Nascentric, which accelerates ‘SPICE’, which is basically (in very loose terms) circuit simulation.

We won’t go into the details here, but it similarly allows for substantial time savings and, possibly more importantly, improved accuracy during the development process. Previously, many companies used ‘FastSPICE’ (a faster but less accurate version of the algorithm) most of the time and only ran full SPICE late in the process, just in time to get everything necessary simulated by tape-out. OmegaSim GX allows an 8x overall speedup (based mostly on a 40x speedup for transistor evaluation, which is the most time-intensive operation). That means you get practically optimal accuracy in the same time you previously needed just to run the FastSPICE approximation with its substantial error margin. It’s a potentially rather disruptive change for that part of the design workflow. Interestingly, it might be possible to run more of the algorithm on the GPU now that double precision is supported, so we might see even larger speedups in the future.
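To make the relationship between the 40x and 8x figures concrete, here is a rough back-of-the-envelope sketch using Amdahl’s law. It assumes, as a simplification of our own, that transistor evaluation is the only part being accelerated and that everything else runs at its original speed; Nascentric has not published such a breakdown, so the function names and the resulting fraction are purely illustrative.

```python
def overall_speedup(fraction, part_speedup):
    """Amdahl's law: overall speedup when `fraction` of the original
    runtime is accelerated by a factor of `part_speedup`."""
    return 1.0 / ((1.0 - fraction) + fraction / part_speedup)


def accelerated_fraction(overall, part_speedup):
    """Invert Amdahl's law: what share of the original runtime must have
    been accelerated to observe the given overall speedup?"""
    return (1.0 - 1.0 / overall) / (1.0 - 1.0 / part_speedup)


# Figures quoted in the article: 8x overall, 40x on transistor evaluation.
# Assumption (ours): nothing else in the simulator gets faster.
fraction = accelerated_fraction(8.0, 40.0)

# Upper bound on the overall speedup if that same portion became
# infinitely fast and the remainder stayed on the CPU.
ceiling = 1.0 / (1.0 - fraction)

print(f"Implied share of runtime in transistor evaluation: {fraction:.1%}")  # 89.7%
print(f"Overall speedup ceiling without moving more work: {ceiling:.2f}x")   # 9.75x
```

Under these assumptions, roughly 90% of the original runtime would have been spent in transistor evaluation, and the remaining 10% or so caps the overall gain at just under 10x no matter how fast the GPU portion becomes. That is why moving more of the algorithm onto the GPU matters more for future speedups than making the already-accelerated part faster.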

Now that these two companies have proven the viability of GPGPU for EDA, major industry players (and the academic community) have taken note, and many are pondering potential applications of CUDA to other parts of the EDA workflow. These things won’t happen overnight, but the current trends in the industry are definitely very promising. It won’t represent a billion dollars in revenue for NVIDIA, of course, but it could still sell a significant number of units given the number of semiconductor design teams in the world and how many Teslas (or other GPGPU solutions) each might one day require.

Oil & Gas: As mentioned in the Andy Keane chat and at last year’s event through the Headwave presentation, the Oil & Gas industry remains a large opportunity that could fairly rapidly generate tens of millions of dollars in gross profit. We’re in the prototyping stage right now, with real solutions being ready and just needing to be proven and polished to go into mass deployment in the next 6 months and beyond.

The primary use of high levels of computation here is for the discovery of new oil reserves and the drilling of new underwater wells, but, as Andy said, the high performance requirements related to the maintenance of existing wells (i.e. trying to extract more oil out of them rather than leave it under the earth, because it’s now economical to do so) could also become a significant revenue opportunity. It’s hard to predict the magnitude, but there are many large oil companies in the world and each might want a few 1000U+ clusters, so this could represent a gross profit opportunity well in excess of a hundred million dollars. Note that this is our own estimate, and may or may not match NVIDIA’s own internal forecasting.