Introduction

Back in early 2004, NVIDIA released their book on graphics programming: GPU Gems. While most of the various contributors focused on techniques aimed at next-generation 3D games, some were aimed at a very different audience. A couple of articles were focused on non-real-time rendering, and some of these techniques are likely used today in NVIDIA's Gelato product line.

In addition to that, the book ended with a section appropriately named "Beyond Triangles", with some miscellaneous chapters on things such as fluid dynamics, as well as chapters on "Volume Rendering Techniques" and "3D Ultrasound Visualization". These two subjects, along with others such as image reconstruction, represent some of the workloads necessary in the highly lucrative visualisation industry.

These two areas, namely visualisation and near-time rendering (via Gelato, for example), represent the primary target markets for NVIDIA's Quadro Plex solutions. In a recent conference call, Jen-Hsun Huang said that they expect this opportunity to represent hundreds of millions of dollars of revenue in the future. In other words, a few innocent chapters in a three-year-old book now look like a tremendous business opportunity.

Then, in GPU Gems 2 (released about one year later in April 2005), the number of chapters focused on near-time rendering techniques jumped tremendously. What's even more interesting, however, is that about 25% of the book's articles were focused on what NVIDIA called "General-Purpose Computation on GPUs". The world was already beginning to realize the potential performance-per-dollar, and performance-per-watt, advantage of GPUs for certain workloads.

One could argue that it was exclusively the growing popularity of websites such as GPGPU.org that pushed NVIDIA to consider the importance of this paradigm. While this certainly must have played a role in their thinking, another significant factor cannot be ignored: NVIDIA already had a very clear idea of what their future architectural decisions would be for the DX10 timeframe, and they likely realised the tremendous potential of their architecture for GPGPU workloads.

Many of the key patents behind NVIDIA's unified shader core architecture were filed in late 2003, including one by John Erik Lindholm and Simon Moy. Based on our questioning, Lindholm was in fact the lead project engineer for G80's shader engine. Other related patents were filed later, including one on a 1.5GHz multi-purpose ALU in November 2004, by Ming Y. Siu and Stuart F. Oberman. The latter was also behind AMD's 3DNow! instruction set and contributed significantly to AMD's K6 and K7 FPUs.

Needless to say, it's been a number of years since NVIDIA realised they could have another significant business opportunity based around more generalised GPU computing. What they needed, then, was a way to extend the market, while also simplifying the programming model and improving efficiency. They dedicated a small team of hardware and software engineers specifically to that problem, and implemented both new hardware features and a new API to expose them: CUDA.

Today, NVIDIA is making the CUDA beta SDK available to the public, which means the fruits of their labour are about to leave the areas of mildly restrictive NDAs and arcane secrets. Of course, we've had access to CUDA for a certain amount of time now, and we've got the full scoop on how it all works and what it all means. So read on!

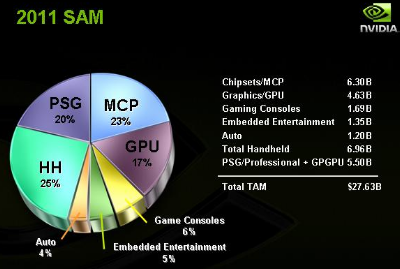

part of their addressable market in 2011 and beyond. Needless to say, that'd put a few new sets of tyres on JHH's Ferrari.